CoTEVer: Chain of Thought Prompting Annotation Toolkit for Explanation Verification


Abstract

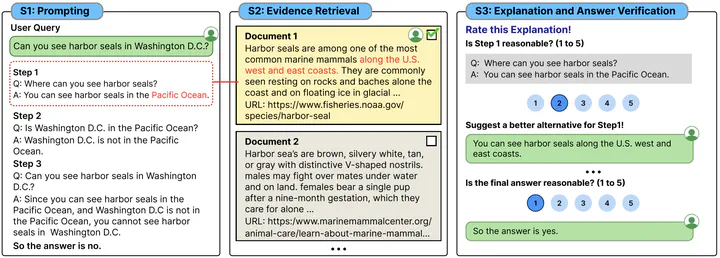

Chain-of-thought (CoT) prompting enables large language models (LLMs) to solve complex reasoning tasks by generating an explanation before the final prediction. Despite its promising ability, a critical downside of CoT prompting is that performance is greatly affected by the factuality of the generated explanation. To improve the correctness of the explanations, language models need to be fine-tuned on explanation data. However, only a few datasets exist that can be used for such approaches, and there is no data collection tool for building them. Thus, we introduce CoTEVer, a toolkit for annotating the factual correctness of generated explanations and collecting revision data for wrong explanations. Furthermore, we suggest several use cases where the data collected with CoTEVer can be utilized to enhance the faithfulness of explanations. Our toolkit is publicly available at https://github.com/SeungoneKim/CoTEVer.
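To make the annotate-then-revise idea concrete, the sketch below shows one possible way to store a CoT explanation with per-step factuality judgments and annotator revisions, and to turn it into a fine-tuning pair. This is a minimal illustration under stated assumptions: the class names (`StepAnnotation`, `ExplanationRecord`), their fields, and the prompt/target layout are all hypothetical and are not CoTEVer's actual data format or API.

```python
from dataclasses import dataclass, field
from typing import List, Optional


# Hypothetical schema for illustration only; field names are assumptions,
# not CoTEVer's actual data format.
@dataclass
class StepAnnotation:
    step: str                       # one sentence of the generated explanation
    is_factual: bool                # annotator's judgment of factual correctness
    revision: Optional[str] = None  # annotator's corrected step, if the step is wrong


@dataclass
class ExplanationRecord:
    question: str
    steps: List[StepAnnotation] = field(default_factory=list)
    answer: str = ""

    def revised_explanation(self) -> str:
        """Replace every step marked non-factual with its revision, when one exists."""
        parts = []
        for s in self.steps:
            if not s.is_factual and s.revision is not None:
                parts.append(s.revision)
            else:
                parts.append(s.step)
        return " ".join(parts)

    def to_finetuning_example(self) -> dict:
        """Build a hypothetical (prompt, target) pair from the revised explanation."""
        return {
            "prompt": f"Q: {self.question}\nA:",
            "target": f" {self.revised_explanation()} So the answer is {self.answer}.",
        }


# Example: the first generated step contains a factual error that an annotator revised.
record = ExplanationRecord(
    question="Which is older, the Eiffel Tower or the Statue of Liberty?",
    steps=[
        StepAnnotation("The Eiffel Tower was completed in 1879.", False,
                       "The Eiffel Tower was completed in 1889."),
        StepAnnotation("The Statue of Liberty was dedicated in 1886.", True),
    ],
    answer="the Statue of Liberty",
)

print(record.to_finetuning_example()["target"])
```

Keeping judgments at the step level, rather than one label per explanation, lets a revised target be assembled by swapping only the faulty sentences; whether CoTEVer itself works at this granularity should be checked against the repository.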

Type

Publication

EACL 2023 Demo
